We’re going to cover how to connect your UDM to an AWS Site-to-Site (S2S) VPN. This is mostly based on this excellent blog, but I wanted to add a few more details.

TLDR:

- Create a CloudWatch log group.

- Under VPC, set up a customer gateway. Use your public IP.

- Create a virtual private gateway. Attach it to your VPC.

- Edit VPC route propagation for your virtual private gateway.

- Create the S2S VPN.

- Configure the UDM Site-to-Site VPN.

Log in to your AWS account. In my case I am doing everything in us-east-1 and using the default VPC. Go to VPC, Customer gateways, and create one, adding your public IP in the IP address field.

Still in VPC, go to Virtual private gateways and create one. The new gateway will be created in the Detached state.

Select your gateway and go to Actions > Attach to VPC. Choose your VPC. Remember the default VPC has access to all AZ subnets by default. If you’re trying to limit access to specific subnets, change this.
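If you would rather script the console steps so far, they can be sketched with boto3. This is a rough sketch rather than what I actually ran: it assumes `ec2` is a client created with `boto3.client("ec2", region_name="us-east-1")`, and the ASN of 65000 is only a placeholder private ASN.

```python
def create_gateways(ec2, public_ip, vpc_id, bgp_asn=65000):
    """Create a customer gateway and a virtual private gateway,
    then attach the virtual private gateway to the VPC.

    `ec2` is expected to behave like boto3.client("ec2").
    """
    cgw_id = ec2.create_customer_gateway(
        BgpAsn=bgp_asn,      # placeholder private ASN (assumption)
        PublicIp=public_ip,  # your home network's public IP
        Type="ipsec.1",
    )["CustomerGateway"]["CustomerGatewayId"]

    vgw_id = ec2.create_vpn_gateway(Type="ipsec.1")["VpnGateway"]["VpnGatewayId"]

    # A new virtual private gateway starts out detached, so attach it.
    ec2.attach_vpn_gateway(VpcId=vpc_id, VpnGatewayId=vgw_id)
    return cgw_id, vgw_id

# Usage (needs boto3 and AWS credentials; the IP and VPC ID are placeholders):
# import boto3
# ec2 = boto3.client("ec2", region_name="us-east-1")
# cgw_id, vgw_id = create_gateways(ec2, "203.0.113.10", "vpc-0123456789abcdef0")
```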

Now it’s time to create your VPN. In VPC go to Site-to-Site VPN connections. This part requires a few important items.

- The virtual private gateway from earlier.

- The customer gateway from earlier.

- Your home network’s network range. You can get this from your UDM by going to Networks. You must select Static routing for this to work. If you do not select static, your UDM tunnels will show as connected, but on the AWS side they will show as down and routing will not work.
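Before entering your home range, it can be worth checking that it does not overlap the VPC’s range, since overlapping ranges tend to cause routing problems. Here is a minimal sketch using Python’s standard `ipaddress` module. The default VPC uses 172.31.0.0/16; the 192.168.1.0/24 home network is just an assumed example.

```python
import ipaddress

def ranges_overlap(home_cidr, vpc_cidr):
    """Return True if the two CIDR ranges share any addresses."""
    home = ipaddress.ip_network(home_cidr)
    vpc = ipaddress.ip_network(vpc_cidr)
    return home.overlaps(vpc)

# Assumed home network vs. the default VPC range.
print(ranges_overlap("192.168.1.0/24", "172.31.0.0/16"))  # False
# A home network carved out of the same range would collide.
print(ranges_overlap("172.31.5.0/24", "172.31.0.0/16"))   # True
```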

Under Tunnel options you’ll want to edit the options.

- The UDM doesn’t support the other Phase 1 encryption algorithms, so just select AES256.

- For Phase 2 choose 256, 384, and 512.

- IKE version is ikev2.

Finally, you’ll want to enable logs and choose your CloudWatch log group. Create the VPN connection.
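For reference, the same choices can be written down as the keyword arguments boto3’s `ec2.create_vpn_connection()` accepts. Treat this as a sketch under a few assumptions: I read "256, 384, and 512" as the SHA2 integrity algorithms, both tunnels get identical options, and the log group ARN in the usage comment is a made-up placeholder.

```python
def vpn_connection_params(cgw_id, vgw_id, log_group_arn):
    """Build keyword arguments for boto3's ec2.create_vpn_connection()."""
    tunnel = {
        # The only Phase 1 encryption option selected in this walkthrough.
        "Phase1EncryptionAlgorithms": [{"Value": "AES256"}],
        # Assumption: "256, 384, and 512" means the SHA2 integrity algorithms.
        "Phase2IntegrityAlgorithms": [
            {"Value": "SHA2-256"},
            {"Value": "SHA2-384"},
            {"Value": "SHA2-512"},
        ],
        "IKEVersions": [{"Value": "ikev2"}],
        "LogOptions": {
            "CloudWatchLogOptions": {
                "LogEnabled": True,
                "LogGroupArn": log_group_arn,
                "LogOutputFormat": "json",
            }
        },
    }
    return {
        "CustomerGatewayId": cgw_id,
        "VpnGatewayId": vgw_id,
        "Type": "ipsec.1",
        "Options": {
            "StaticRoutesOnly": True,  # static routing, as required above
            "TunnelOptions": [dict(tunnel), dict(tunnel)],  # one entry per tunnel
        },
    }

# Usage (placeholder IDs and ARN):
# params = vpn_connection_params(
#     "cgw-0123456789abcdef0", "vgw-0123456789abcdef0",
#     "arn:aws:logs:us-east-1:111122223333:log-group:vpn-logs",
# )
# ec2.create_vpn_connection(**params)
```

With static routing, the home network range is added as a separate step; in boto3 that is `create_vpn_connection_route` with the connection ID and a `DestinationCidrBlock`.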

I was surprised by how long this took. At worst it took over 10 minutes for the connection to finally go into the Available state. You will then see both tunnels in the Down state.
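Instead of refreshing the console while you wait, you could check the state from a script. This is a small sketch that assumes `ec2` behaves like `boto3.client("ec2")`; the connection ID in the usage comment is a placeholder.

```python
def vpn_status(ec2, vpn_connection_id):
    """Return (connection state, {tunnel outside IP: tunnel status})."""
    resp = ec2.describe_vpn_connections(VpnConnectionIds=[vpn_connection_id])
    conn = resp["VpnConnections"][0]
    # VgwTelemetry holds one entry per tunnel with an UP/DOWN status.
    tunnels = {
        t["OutsideIpAddress"]: t["Status"]
        for t in conn.get("VgwTelemetry", [])
    }
    return conn["State"], tunnels

# Usage (placeholder ID):
# state, tunnels = vpn_status(ec2, "vpn-0123456789abcdef0")
# print(state, tunnels)
```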

Finally, download the configuration file; you’ll want to use Generic for the vendor.

One last thing we want to do in AWS is modify our VPC route tables. In VPC, go to Route tables, Actions, Edit route propagation. Select your virtual private gateway and enable propagation.
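The propagation step can also be scripted. Here is a minimal sketch, again assuming `ec2` behaves like `boto3.client("ec2")` and that you already know which route table IDs you want to update (the IDs in the usage comment are placeholders).

```python
def enable_propagation(ec2, vgw_id, route_table_ids):
    """Enable route propagation from the virtual private gateway
    on each of the given route tables. Returns the tables updated."""
    updated = []
    for rtb_id in route_table_ids:
        ec2.enable_vgw_route_propagation(GatewayId=vgw_id, RouteTableId=rtb_id)
        updated.append(rtb_id)
    return updated

# Usage (placeholder IDs):
# enable_propagation(ec2, "vgw-0123456789abcdef0", ["rtb-0123456789abcdef0"])
```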

Next, we move to the UDM. Go to Settings, VPN, Site-to-Site VPN, Create new. From the configuration file you downloaded above, locate the Tunnel 1 pre-shared key:

IPSec Tunnel #1

===============================================

#1: Internet Key Exchange Configuration

…

- IKE version : IKEv2

- Authentication Method : Pre-Shared Key

- Pre-Shared Key : Hy1YkAa33asfj32as3f3nkLT5HVHqw

and the outside IP address for the virtual private gateway:

Outside IP Addresses:

…

- Virtual Private Gateway : 54.87.213.29
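If you don’t want to hunt through the file by hand, the two values can be pulled out with a short script. This is only a sketch: it assumes the file follows the layout of the excerpts above (an "IPSec Tunnel #N" heading per tunnel, then "Key : value" lines), and the file name in the usage comment is hypothetical.

```python
import re

def parse_tunnels(config_text):
    """Return one dict per tunnel with its PSK and the virtual private
    gateway's outside IP, based on the layout shown in the excerpts."""
    tunnels = []
    in_outside = False
    for raw in config_text.splitlines():
        line = raw.strip()
        if re.match(r"IPSec Tunnel #\d+", line):
            tunnels.append({})
            in_outside = False
        elif not tunnels:
            continue  # skip the preamble before the first tunnel
        elif line.startswith("Outside IP Addresses"):
            in_outside = True
        elif line.startswith("Inside IP Addresses"):
            in_outside = False
        else:
            # Lines look like "- Key : value" (hyphen or en dash bullet).
            m = re.match(r"[-–]\s*(.+?)\s*:\s*(\S+)$", line)
            if not m:
                continue
            key, value = m.groups()
            if key == "Pre-Shared Key":
                tunnels[-1]["psk"] = value
            elif key == "Virtual Private Gateway" and in_outside:
                tunnels[-1]["vgw_outside_ip"] = value
    return tunnels

# Usage (hypothetical file name):
# with open("vpn-config.txt") as f:
#     for n, t in enumerate(parse_tunnels(f.read()), start=1):
#         print(n, t)
```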

Configure your tunnel settings using the PSK and remote IP from the configuration file. Select. For remote networks, I chose the subnets of the AZs I wanted to route to.

Next, go to Advanced and match my configuration. In my case I like to keep tunnel 1 with the DH Group 23 and tunnel 2 with DH Group 24. Ultimately, just make sure this matches your AWS tunnel configuration.

When you save the second tunnel, if you chose the same remote network subnets, you’ll get a warning about an overlap between both tunnels. Change the route distance to make one of the tunnels the failover. Then save your second tunnel configuration.

After a few minutes you’ll see both tunnels come online from the UDM.

And from AWS.

If you take a look at the CloudWatch logs and see the following, it means that the PSK was incorrect. Note that you’ll have a log per tunnel, and you can tell them apart by the public IP of the tunnel.

{ "event_timestamp": 1741916140, "details": "AWS tunnel detected a pre-shared key mismatch with cgw-063ce7f933f454158", "dpd_enabled": true, "nat_t_detected": false, "ike_phase1_state": "down", "ike_phase2_state": "down" }

An entry like this one means that your connection is established:

{ "event_timestamp": 1741943346, "details": "received packet: from cgw-063ce7f933f454158 [UDP 4500] to 52.200.131.39 [UDP 4500] (80 bytes)", "dpd_enabled": true, "nat_t_detected": true, "ike_phase1_state": "established", "ike_phase2_state": "established" }
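If you end up reading a lot of these entries, a few lines of Python can sort them for you. This is a minimal sketch that only knows about the two kinds of entries shown above, and it assumes each log message is valid JSON with plain straight quotes; the labels it returns are my own.

```python
import json

def tunnel_state(log_message):
    """Classify one tunnel log message as 'psk-mismatch',
    'established', or 'down' (labels chosen for this sketch)."""
    event = json.loads(log_message)
    if "pre-shared key mismatch" in event.get("details", ""):
        return "psk-mismatch"
    phase1 = event.get("ike_phase1_state")
    phase2 = event.get("ike_phase2_state")
    if phase1 == "established" and phase2 == "established":
        return "established"
    return "down"

mismatch = (
    '{"event_timestamp": 1741916140, "details": "AWS tunnel detected a '
    'pre-shared key mismatch with cgw-063ce7f933f454158", "dpd_enabled": true, '
    '"nat_t_detected": false, "ike_phase1_state": "down", "ike_phase2_state": "down"}'
)
print(tunnel_state(mismatch))  # psk-mismatch
```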

Finally, you can spin up an EC2 instance with NO public IP. Select the subnet you configured in your tunnel. Once the instance is running, ping its private IP from your local computer.

~david

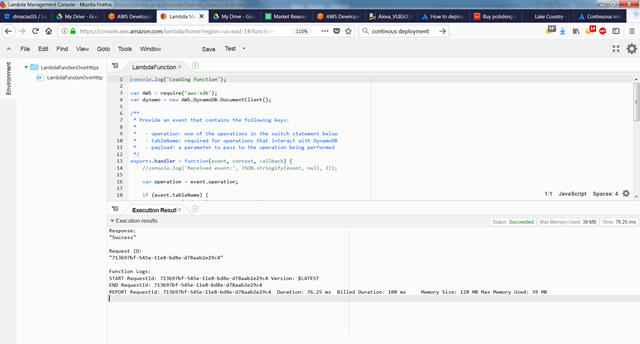

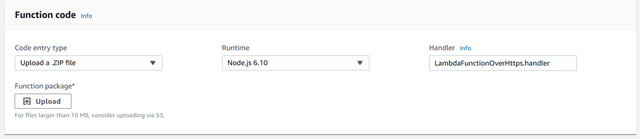

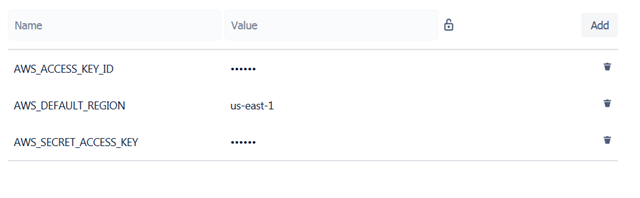

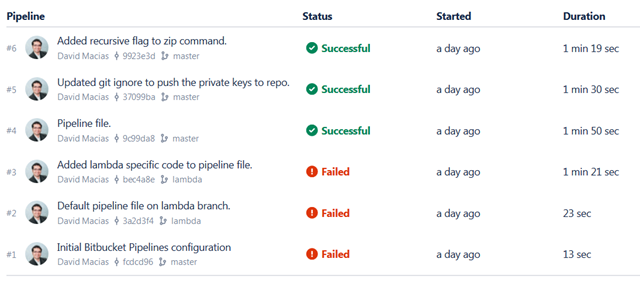

